Why AI models still cannot tell which instructions to trust

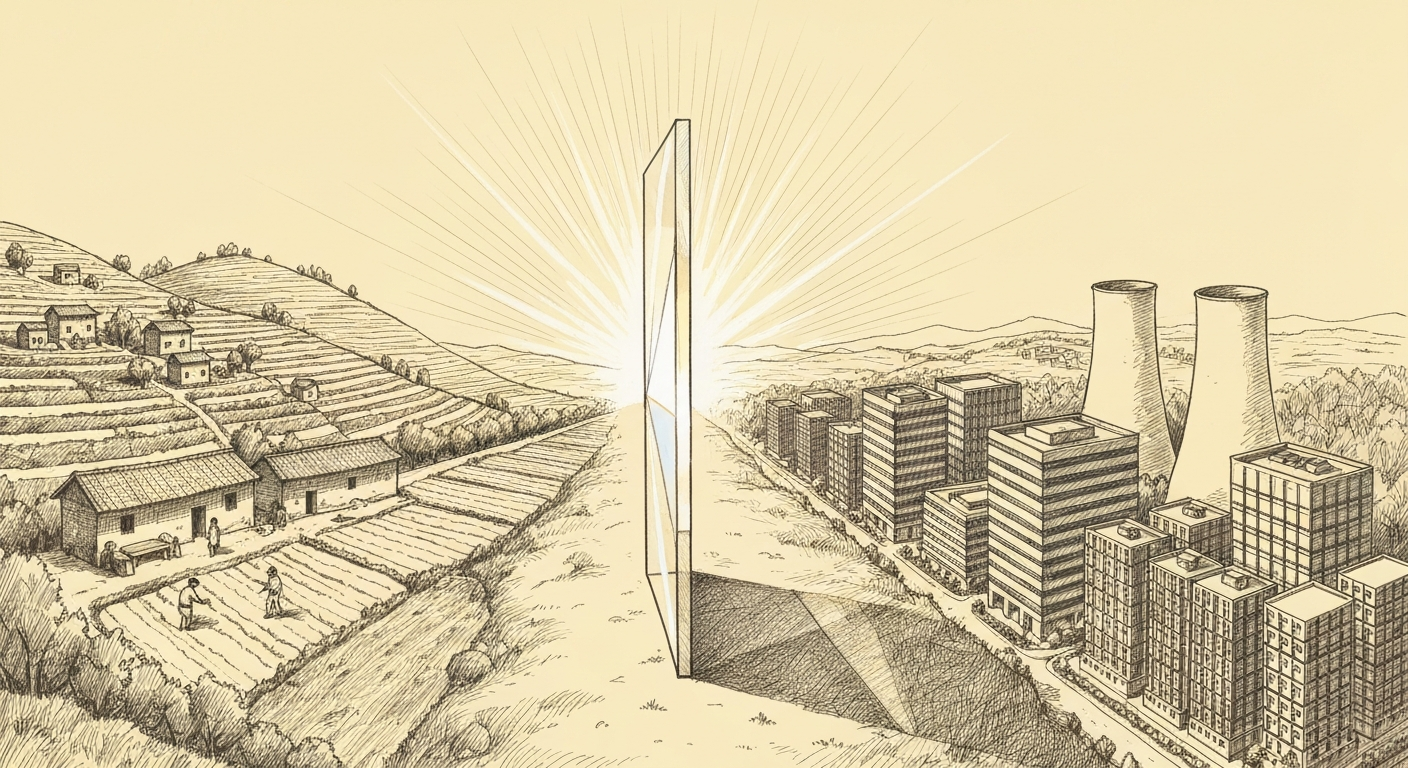

AI systems face a foundational vulnerability: they cannot reliably distinguish trusted instructions from malicious ones. OpenAI's instruction hierarchy training is a first step, but the company's own evolution toward reasoning-based approaches suggests training data alone may not be enough.

In April 2024, OpenAI published a training methodology aimed at this exact failure: the inability of AI models to tell which instructions to trust and which to ignore. The paper, arXiv:2404.13208 (a preprint, not yet peer-reviewed), proposes a three-tier privilege system and training methods to enforce it. Two years...
