AI facial recognition error kept innocent grandmother jailed for nearly six months

A Tennessee woman spent 163 days behind bars after a Fargo police detective used AI facial recognition to identify her as a bank fraud suspect — despite bank records placing her 1,200 miles from the crime. The case exposes what experts call a systemic failure: deploying AI identification tools without basic verification steps.

What Happened

Angela Lipps, a grandmother from Tennessee, was arrested at gunpoint on July 14 by a U.S. Marshals team while babysitting four children at her home. Fargo police had used AI facial recognition software to match her photo — drawn from social media and her driver's license — to surveillance footage of a suspect in a North Dakota bank fraud case.

She was held without bail for nearly four months in a Tennessee jail before being transferred to North Dakota, for a total of roughly 163 days of incarceration. The charges were dismissed on December 24 after bank records and financial evidence confirmed she had been in Tennessee, 1,200 miles away, at the time of the alleged crime.

According to court documents reviewed by InForum, no detective from the Fargo Police Department called to question Lipps before or after her arrest. During her incarceration, she lost her home, car, and dog. The FBI and U.S. Marshals were involved in the arrest operation.

Why It Matters

The Lipps case is not an isolated incident. The Innocence Project has documented at least seven confirmed wrongful arrests linked to facial recognition misidentification in the United States, and six of those seven cases involve Black individuals. That pattern tracks with research showing that facial recognition algorithms are significantly less accurate on darker skin tones and on women.

The technical limitation at the core of this case is well-documented. Former Detroit Police Chief James Craig stated publicly that facial recognition used on its own "would yield misidentifications 96% of the time." That figure makes the tool unusable as standalone evidence — yet in the Lipps case, the facial match appears to have driven the arrest without independent corroboration.

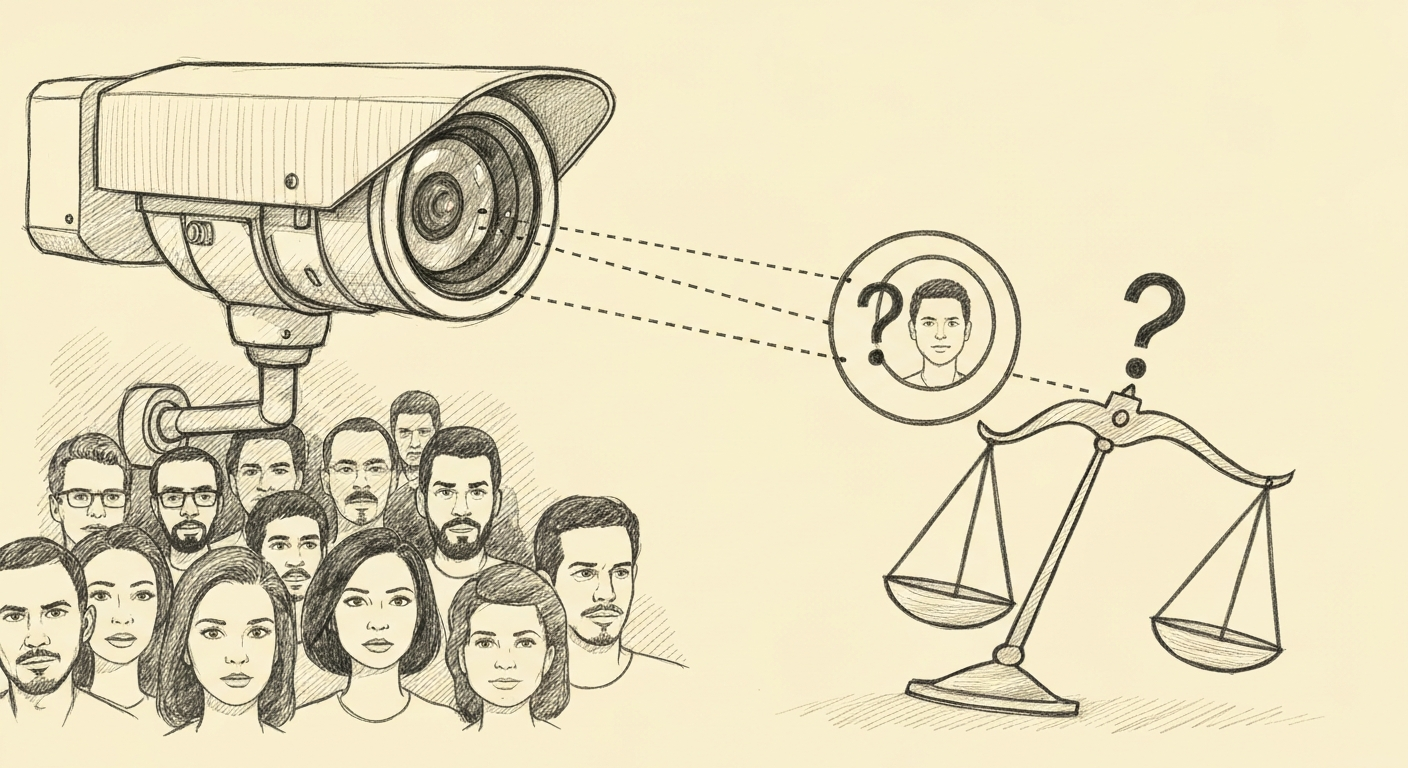

Facial recognition technology (software that compares a face in an image against a database of known individuals) is increasingly used by law enforcement agencies across the United States, often with minimal regulatory oversight and no federal standard for how strong a match must be before police can act on it. The absence of a required verification step — such as a direct interview, financial record check, or alibi inquiry before arrest — allowed a false positive to become a months-long imprisonment.

What makes this case significant beyond the individual harm is what it reveals about process: the bank records that exonerated Lipps existed before her arrest. The evidence was accessible. The system failed not because AI made an error — errors are expected and documented — but because no human checkpoint was required to catch it.

As AI tools become more embedded in law enforcement workflows, policymakers and police departments may face increasing pressure to define mandatory corroboration standards before AI-generated identifications can authorize an arrest.
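One way to picture such a corroboration standard is as a simple gate in a case-management workflow. The sketch below is purely illustrative and does not describe any system used by Fargo police or any other agency: the check names are taken from the verification steps mentioned above, while `Identification`, `may_seek_arrest`, and `min_checks` are hypothetical names introduced here. It assumes a rule under which a facial match alone, however strong, never authorizes an arrest.

```python
from dataclasses import dataclass, field

# Hypothetical check names, based on the verification steps named in the article.
REQUIRED_CHECKS = {"direct_interview", "financial_records", "alibi_inquiry"}


@dataclass
class Identification:
    match_score: float  # similarity score reported by a (hypothetical) matcher
    completed_checks: set = field(default_factory=set)
    contradicted: bool = False  # True if any check placed the person elsewhere


def may_seek_arrest(ident: Identification, min_checks: int = 1) -> bool:
    """Return True only if independent corroboration exists.

    The match score is deliberately ignored: under this assumed policy,
    no score is high enough to substitute for a human checkpoint.
    """
    if ident.contradicted:
        return False
    done = ident.completed_checks & REQUIRED_CHECKS
    return len(done) >= min_checks


# A strong match with no corroboration does not pass the gate.
print(may_seek_arrest(Identification(match_score=0.99)))
# A match contradicted by financial records does not pass either.
print(may_seek_arrest(Identification(0.99, {"financial_records"}, contradicted=True)))
```

Under this assumed rule, a case like the one described would have stopped at the gate, since no corroborating check had been completed and the bank records contradicted the match.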

