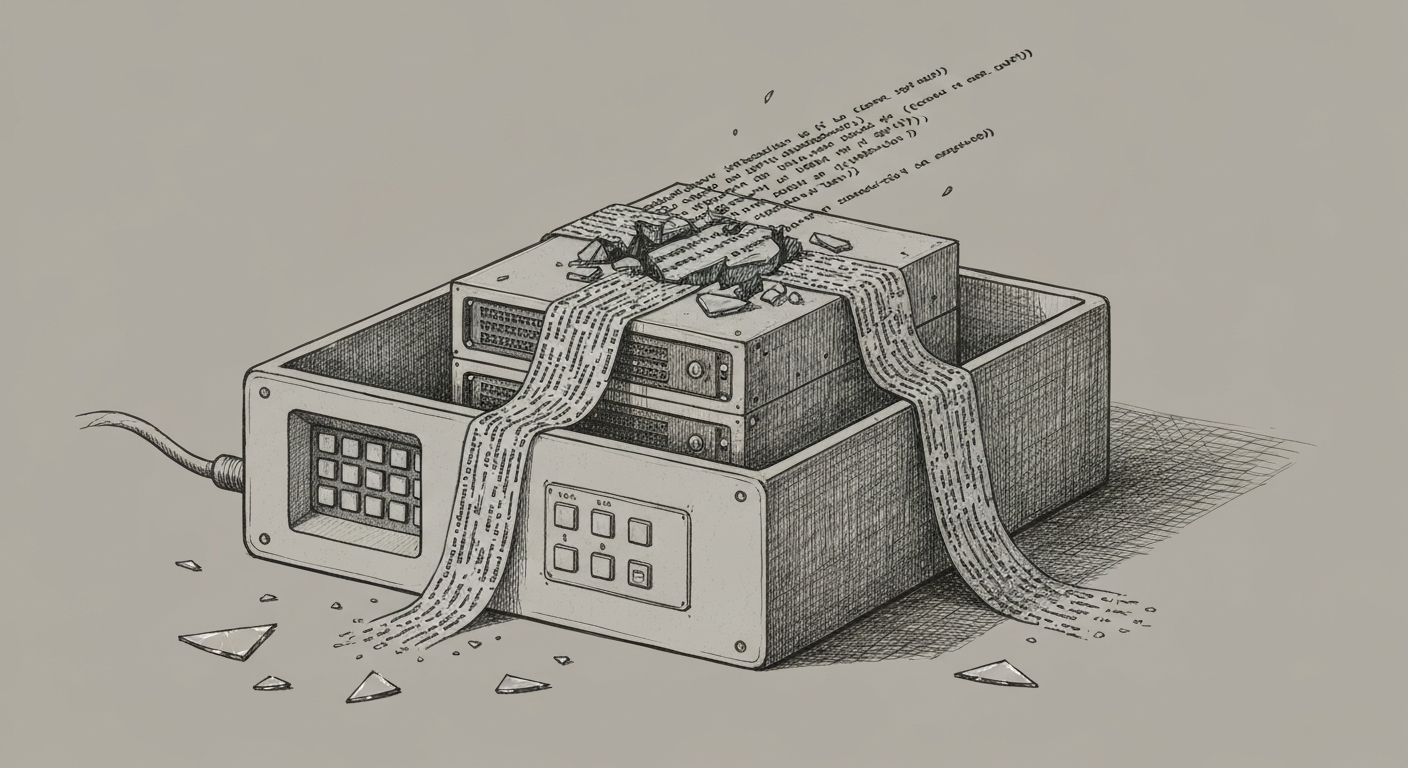

Snowflake's Cortex AI Agent Executed Malicious Code Through a Boundary That Was Supposed to Stop It

A Snowflake Cortex AI agent asked to review a GitHub repository did exactly that — and in the process, executed code it was never supposed to run. According to Willison's reporting, PromptArmor embedded a prompt injection attack in a repository's README file. When a Cortex user directed the agent to...
